Weina Jin

Trying hard to make AI explainable to clinical users

Hi! I am Weina, a PhD student in Dr. Hamarneh’s Medical Image Analysis Lab, Computing Science, Simon Fraser University.

My research focuses on developing end-user-centered interpretable artificial intelligence (AI) and on using it to augment doctors’ clinical decision making in medical image tasks. I’m especially interested in using explanations for better learning, for both AI (enabling AI to learn better by forcing explicit representations) and doctors (learning from those explicit representations to accumulate experience from big clinical data).

Previously, I received my Doctor of Medicine (MD) degree from Peking University and underwent residency training in neurology. I have worked in a hospital, a pharmaceutical company, and a tech company. Now, in addition to my research on training and making sense of artificial intelligence, I am engaged in training and understanding natural intelligence, and in writing science fiction.

My research contributions to human-centred AI and its explanation

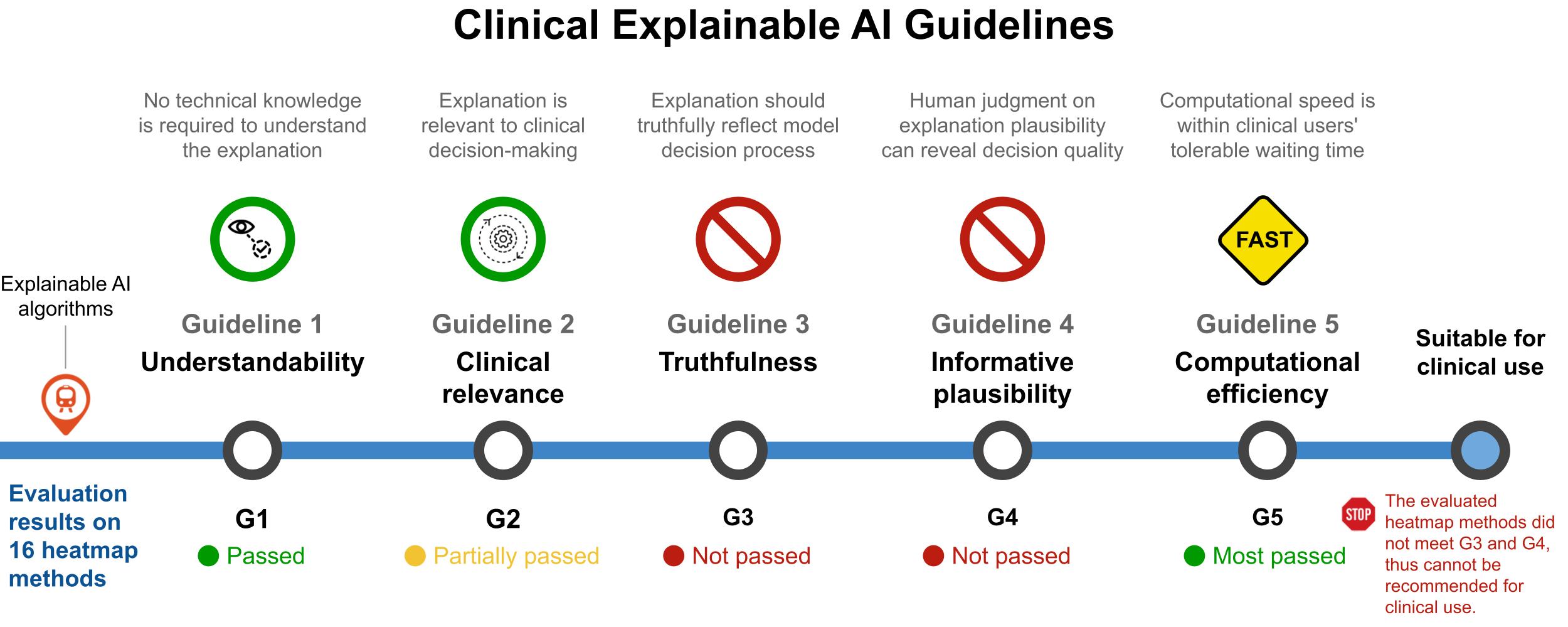

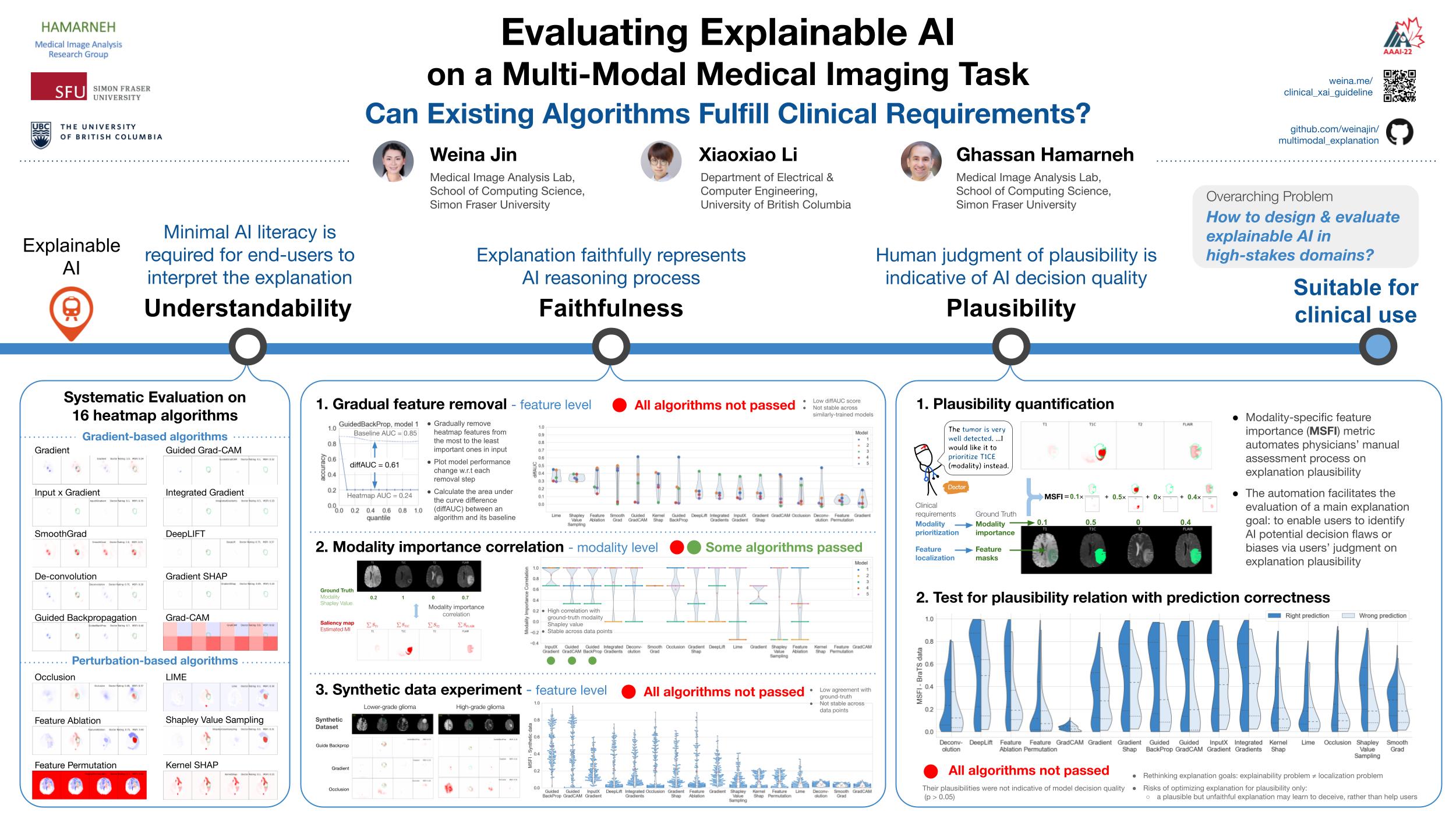

In my research, I propose the Clinical explainable AI Guidelines, based on a systematic evaluation of existing explainable AI algorithms (AAAI22, ICML21 workshop) and a user study with neurosurgeons. The guidelines provide well-specified user-centered requirements and evaluation criteria for developing explainable AI for clinical tasks, and could potentially be extended to other high-stakes domains.

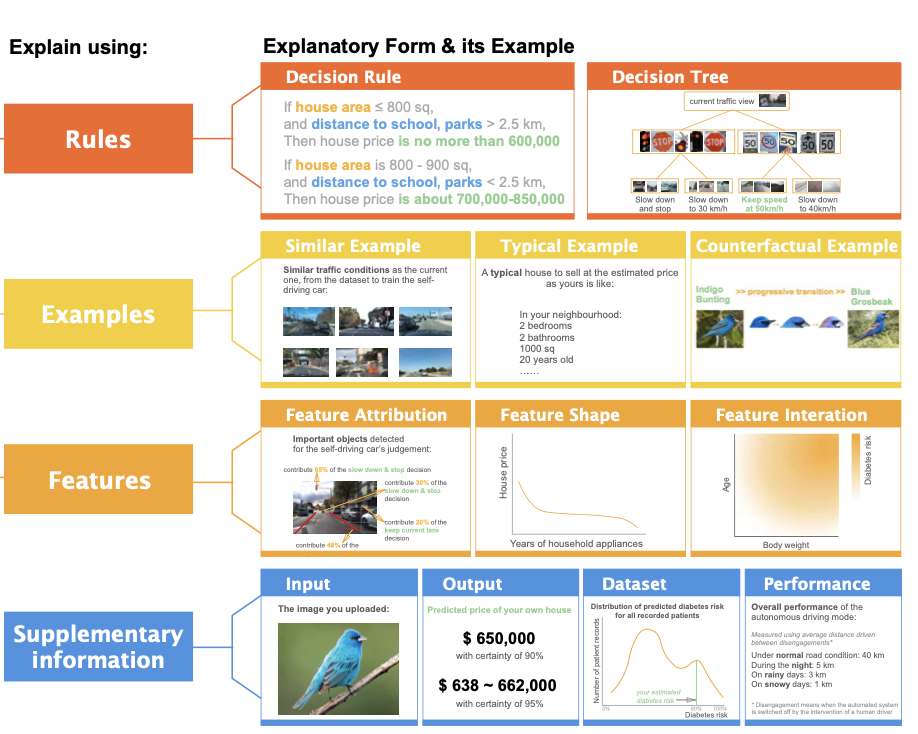

The EUCA framework is another tool to support the development of end-user-centered explainable AI. It provides fine-grained design suggestions for feature-, example-, and rule-based explanations, grounded in the end-users’ perspectives gathered in my user study. A follow-up work built upon EUCA analyzed clinical user requirements for explainable AI through a user study with 25 physicians, including radiologists, pathologists, dermatologists, and neurologists.

A 3-min video introduction of my research projects:

News

| Date | News |
|---|---|
| Jun 28, 2022 | I was named one of ten fellows of the Borealis AI Global Fellowship, an award of $10,000. Media report from Borealis AI: The Borealis AI 2021-2022 Fellowships: Inspiring Canada’s AI Research Talent |
| Jun 20, 2022 | I am co-organizing the Interpretable ML in Healthcare workshop at ICML22. |
| Dec 9, 2021 | Our work on evaluating XAI for medical image tasks was accepted at AAAI 2022. |

Selected publications

Weina Jin is a Computer Science Ph.D. student at the Medical Image Analysis Lab, Simon Fraser University, supervised by Professor Ghassan Hamarneh. Weina received her Doctor of Medicine degree from Peking University and underwent neurological residency training at Peking University First Hospital. She also received research training in human-computer interaction at Simon Fraser University. Weina's research combines her multidisciplinary experience in medicine, machine learning, and human-computer interaction, and focuses on developing physician-centered explainable artificial intelligence for clinical decision support. She uses a human-centered approach in technical development and evaluation to make AI more clinically applicable. Her work has been published at AAAI 2022 and received the best poster presentation award at IEEE VIS 2019. She is also a recipient of the 2022 Borealis AI Global Fellowship.